ESG Position Note No. 4 | AI as Infrastructure of the Twin Transition

26 February 2026 | Frankfurt am Main

Edited by Sonia Artuso in collaboration with the Osservatorio ESG AIAF “A. Gasperini”, Teresa Royo Luesma, Jean-Philippe Desmartin, Frank Klein, Bozsik Balázs and the other members of the EFFAS Commission on ESG (EFFAS CESG).

What Do We Mean by AI in the Context of ESG?

Within the European strategic and regulatory framework, artificial intelligence (AI) is increasingly recognised not as a standalone technology, but as an enabling infrastructure that shapes economic models, corporate governance, financial decision-making, risk management, and sustainability performance. AI refers to a broad set of technologies, including predictive, generative and agentic systems, capable of processing large-scale datasets, identifying patterns, and supporting or automating decision-making across value chains. In the ESG context, AI plays a dual and interdependent role:

- as an enabler of sustainability, improving efficiency, transparency, risk assessment and climate-related decision-making,

- as an object of sustainability assessment, given its impacts on energy consumption, data governance, social outcomes, and fundamental rights.

This dual nature places AI at the core of the European twin transition[1], where digital transformation and sustainability objectives must progress jointly and coherently, rather than sequentially.

[1] EC, Green digital sector, https://digital-strategy.ec.europa.eu/en/policies/green-digital, last access 30.12.2025.

AI, Polycrisis and Systemic Risk

The World Economic Forum describes the current global context as a polycrisis: interconnected environmental, geopolitical, technological and social crises generating cascading risks for markets, institutions and societies. AI both mitigates and amplifies this systemic dimension. On the one hand, AI strengthens predictive capacity, resilience and adaptive responses, including climate modelling, infrastructure optimisation and risk forecasting. On the other hand, it exacerbates structural vulnerabilities, notably:

- large-scale disinformation dynamics,

- cyber and data-security risks,

- technological dependencies,

- and resource pressures linked to digital infrastructure and data centres.

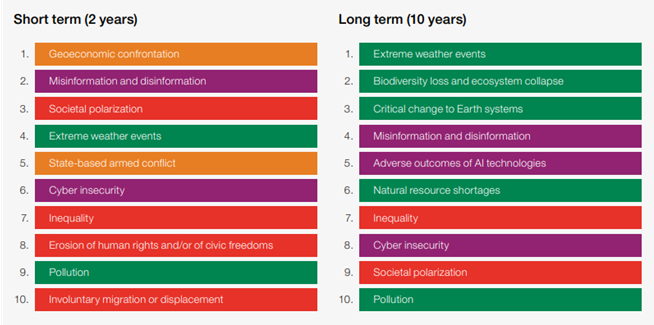

As highlighted in recent editions of the WEF Global Risks Report, most recently the 2026 edition[1], cyber risks and AI-driven disinformation rank among the most severe short- and medium-term threats, while environmental risks dominate the long-term horizon (Fig. 1). In this context, AI governance becomes inseparable from ESG risk management.

Fig. 1. Global risks ranked by severity, short term (2 years) and long term (10 years)

[1] WEF, Global Risks Report 2026, https://reports.weforum.org/docs/WEF_Global_Risks_Report_2026.pdf, last access 25.01.2026.

AI as a Lever of Sustainable Competitiveness

The European Union has reframed AI not merely as a driver of productivity, but as a strategic infrastructure for long-term competitiveness. Productivity gains enabled by AI in ESG reporting, data collection and process automation may allow investors and analysts to reallocate resources towards higher value-added activities, such as fundamental analysis, methodological development and client engagement, strengthening the strategic dimension of investment decision-making.

This approach aligns with the pillars outlined in the Draghi Report[1] on European competitiveness (innovation, security and decarbonisation) and is operationalised through an integrated policy architecture:

- the Clean Industrial Deal[2], embedding AI into industrial decarbonisation and energy efficiency pathways,

- the Digital Decade 2030[3], linking AI deployment to skills, inclusion and secure digital infrastructure,

- the AI Continent Action Plan (2025)[4], positioning AI as a pillar of technological sovereignty.

Under this framework, the EU plans to mobilise over €200 billion by 2030, including the development of a network of 13–19 AI Factories and up to five AI Gigafactories, aimed at strengthening Europe’s computational capacity, supporting industrial transformation and enabling scalable ESG solutions. AI is therefore increasingly treated as a cognitive infrastructure of the twin transition, rather than as a purely market-driven technology.

[1] EC, The Draghi Report – A competitiveness strategy for Europe, 2024, https://tinyurl.com/y4p74dd5, last access 30.12.2025.

[2] EC, COM(2025) 85 – EU Clean Industrial Deal, 2025, https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX%3A52025DC0085, last access 30.12.2025.

[3] EC, 2030 Digital Compass: the European way for the Digital Decade, 2021, https://eur-lex.europa.eu/legal-content/EN/TXT/PDF/?uri=CELEX:52021DC0118, last access 30.12.2025.

[4] EC, AI Continent Action Plan, 2025, https://digital-strategy.ec.europa.eu/en/library/ai-continent-action-plan, last access 30.12.2025.

Policy and Regulatory Drivers

The EU has developed a coherent and interconnected regulatory architecture to govern AI in alignment with sustainability objectives. Key elements include:

- the EU Artificial Intelligence Act[1], establishing a risk-based framework that links AI use to governance obligations, transparency requirements, human oversight and the protection of fundamental rights,

- the Corporate Sustainability Reporting Directive (CSRD) and the European Sustainability Reporting Standards (ESRS), which increasingly intersect with AI-enabled data systems, controls and decision-support tools,

- digital governance frameworks addressing cybersecurity, accountability and inclusion,

- the EU sustainable finance framework, including the EU Taxonomy and disclosure regulations, which rely on data integrity, comparability and forward-looking metrics, areas where AI plays a growing operational role.

Together, these instruments shift AI governance from voluntary ethical principles to binding strategic responsibility, embedding it within corporate strategy, board oversight and financial reporting.

[1] EC, Regulation (EU) 2024/1689 on artificial intelligence (EU AI Act), 2024, https://eur-lex.europa.eu/legal-content/IT/TXT/HTML/?uri=OJ:L_202401689, last access 30.12.2025.

Governance, Accountability and Risk Management

The adoption of AI has direct implications for corporate governance and fiduciary responsibility. Beyond well-established concerns such as algorithmic bias, transparency and explainability, organisations are increasingly required to address:

- duty of care, involving the anticipation and mitigation of reasonably foreseeable AI-related risks,

- board accountability, as responsibility for AI deployment cannot be delegated solely to technical functions or external providers,

- the integration of AI-related risks into enterprise risk management frameworks.

AI also introduces forms of uncertainty that cannot be fully quantified ex ante. This reinforces the need for strategic foresight, understood as the capacity to anticipate scenarios, identify emerging risks and govern long-term uncertainty.

Data Governance and Trust

Data constitute both the foundation and the primary vulnerability of AI systems.

Trustworthy AI cannot exist without:

- robust data quality controls,

- cybersecurity safeguards,

- effective data protection measures,

- sound governance and access frameworks.

As AI-related errors and hallucinations are unlikely to disappear, advanced human expertise remains essential to validate outputs, exercise professional judgement and manage AI-related risks. This reinforces the strategic relevance of advanced digital and analytical skills, in line with the Digital Decade 2030 objective of strengthening Europe’s pool of highly qualified ICT and AI professionals.

As AI increasingly underpins ESG metrics, disclosures and decision-making processes, failures in data governance may translate directly into financial, regulatory and reputational risks. Data trust therefore represents a structural condition for ESG credibility and organisational resilience.

Climate: The Ultimate Stress Test of AI Sustainability

Climate change is the domain in which AI’s sustainability credentials become fully measurable and assessable. AI supports the translation of climate science into operational and financial insights, including:

- physical and transition risk assessment,

- scenario analysis,

- portfolio-level impact evaluation.

At the same time, AI is not environmentally neutral. Digital infrastructures and data centres already account for a growing share of global electricity consumption, with projections indicating significant increases under high-growth scenarios. Climate therefore functions as the ultimate stress test of the twin transition: AI must accelerate mitigation, adaptation and resilience, while its own environmental footprint must remain compatible with planetary boundaries[1], including constraints related to water stress and critical raw materials[2] along the AI value chain.

The dominance of environmental risks over the long-term horizon, consistently highlighted by global risk assessments, reinforces the need to evaluate AI not only as a short-term efficiency tool, but as a structural component of long-term ESG resilience.

[1] Rockström J. et al., Planetary Boundaries: Exploring the Safe Operating Space for Humanity, 2009; updated framework in Stockholm Resilience Centre, Planetary Boundaries, 2015–2023, https://www.stockholmresilience.org/research/planetary-boundaries.html, last access 04.02.2026.

[2] International Energy Agency (IEA), Global Critical Minerals Outlook 2025, https://iea.blob.core.windows.net/assets/ef5e9b70-3374-4caa-ba9d-19c72253bfc4/GlobalCriticalMineralsOutlook2025.pdf, last access 04.02.2026.

Social Dimension, Rights and Skills

AI sustainability is inherently social. Key challenges include:

- algorithmic bias and discrimination,

- surveillance and erosion of autonomy,

- labour transformation and skills mismatches,

- unequal access to digital benefits.

The social implications of AI in terms of job creation and job displacement remain the subject of active debate. According to the WEF’s Future of Jobs Report 2025[1], structural labour-market transformation, driven to a large extent by technological and digital change, is expected to affect around 22% of current jobs by 2030, with approximately 170 million new jobs created and 92 million roles displaced globally. This implies a net increase of about 78 million jobs, alongside a significant reallocation of roles and skills across sectors.

A central concept is AI literacy: the ability of individuals, not only developers, to understand when AI systems are used, how they influence decisions, and what their limits are. AI literacy is essential for inclusion, accountability, and trust. An effective AI transition must therefore be accompanied by investment in skills, reskilling, and inclusive governance models.

[1] World Economic Forum, The Future of Jobs Report 2025, 2025, https://www.weforum.org/publications/the-future-of-jobs-report-2025/, last access 03.02.2026.

From Principles to Practice: Key Questions for AI–ESG Governance

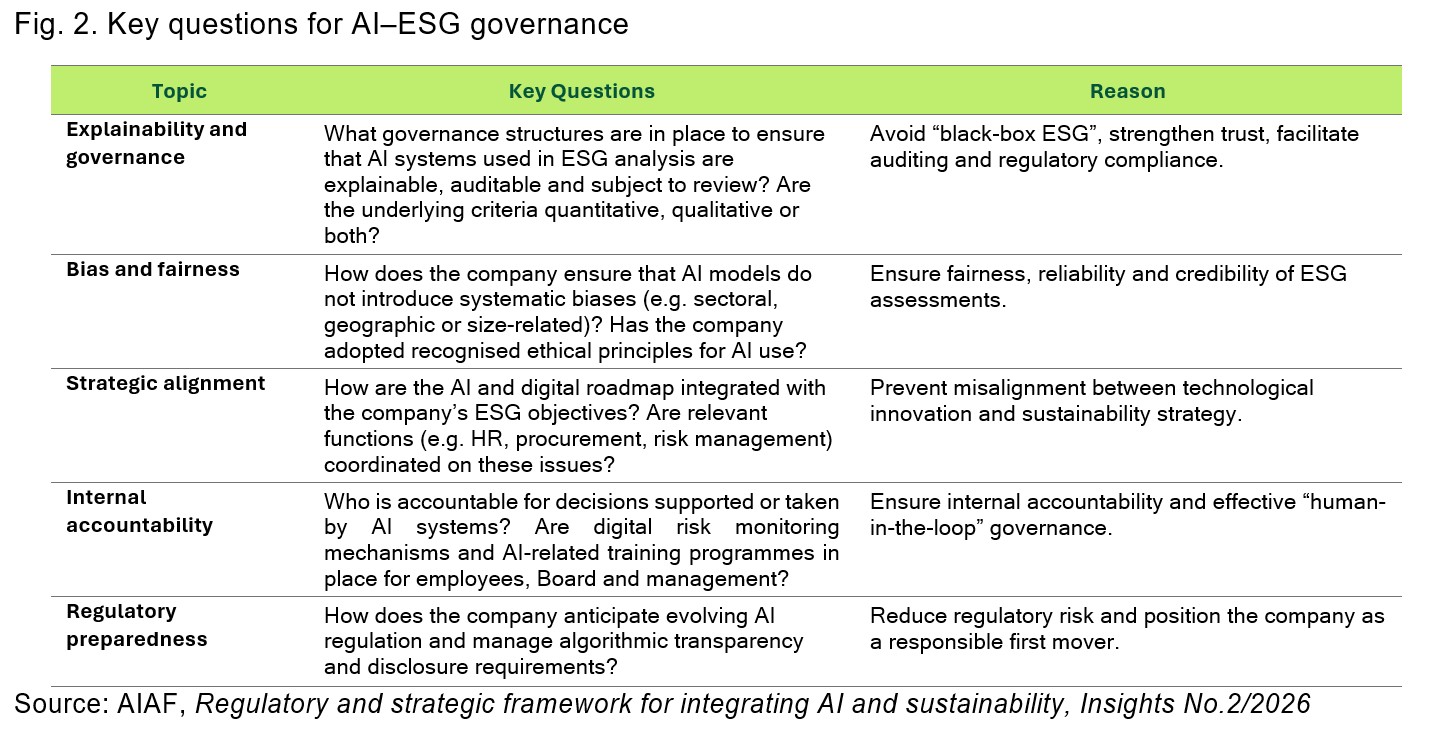

The growing integration of AI into financial analysis and sustainability assessment calls for governance approaches that are not only compliant, but also responsible, transparent and human-centred.

In this context, the Hamburg Declaration on Responsible AI[1], developed through an international multi-stakeholder process involving policymakers, academia, business and civil society, provides a shared reference framework grounded in accountability, explainability, human oversight and societal trust.

The Declaration explicitly aligns responsible AI development with the United Nations Sustainable Development Goals (UN SDGs), emphasising the role of AI as an enabler of inclusive, sustainable and long-term value creation. Building on these principles, and drawing on the experience of analysts and investors, the questions in Fig. 2 aim to support a structured and decision-useful assessment of how companies govern the interaction between AI and ESG.

[1] Hamburg Declaration on Responsible Artificial Intelligence, adopted through an international multi-stakeholder initiative involving policymakers, academia, business and civil society, promoting responsible, human-centred AI aligned with the United Nations Sustainable Development Goals, 2023, https://www.undp.org/south-africa/publications/hamburg-declaration-responsible-artificial-intelligence, last access 03.02.2026.

Conclusion

The European sustainable finance and digital framework is evolving, combining regulatory initiatives such as the revision of the SFDR (“SFDR 2.0”)[1], the AI Act and the Omnibus simplification package with a broader strategic agenda focused on competitiveness, technological sovereignty and the twin transition. Within this framework, regulatory emphasis is shifting from disclosure volume to data quality, comparability and product categorisation.

Within this framework, AI should be regarded as an enabling infrastructure rather than a standalone solution. Consistent with EFRAG’s work on financial–sustainability connectivity[2], the emerging European regulatory framework for ESG ratings[3], and initiatives such as the European Single Access Point (ESAP) and the Omnibus simplification package, AI’s potential contribution lies in improving the consistency, traceability and connectivity of sustainability information at source, so that ESG data and ratings can rely on a more structured, strategy-linked and forward-looking information base.

AI and ESG are no longer parallel agendas. They are mutually reinforcing imperatives. Artificial intelligence can accelerate sustainability, enhance resilience, and strengthen Europe’s competitiveness, but only if embedded within robust governance frameworks, credible regulation, and inclusive social strategies.

In an increasingly fragmented and competitive global environment, the credibility of AI governance becomes a decisive factor for long-term value creation and investor confidence. The convergence of AI and ESG increasingly shapes capital market dynamics. Investors and analysts are progressively assessing:

- the quality of AI governance,

- the credibility of AI-related risk management,

- the alignment between AI deployment and long-term sustainability objectives.

Companies that demonstrate transparent, measurable, and well-governed AI use are better positioned to attract long-term capital. In this sense, AI becomes not only a driver of efficiency, but a multiplier of trust across investors, regulators, and stakeholders.

EFFAS and its ESG Commission remain committed to supporting the financial community through analysis, training, and thought leadership. By framing AI as an infrastructure of the twin transition, this Position Note aims to equip financial professionals with a coherent framework to assess risks, opportunities, and long-term value creation in an AI-driven European economy.

[1] EC, Proposal for the revision of Regulation (EU) 2019/2088 on sustainability-related disclosures in the financial services sector (SFDR 2.0), 2025, https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX:52025PC0841, last access 03.02.2026.

[2] EFRAG, Connectivity between financial statements and sustainability reporting – User perspective (Consultation paper), 2025, https://www.efrag.org/en/news-and-calendar/news/efrag-publishes-discussion-paper-on-connectivity-of-financial-and-sustainability-reporting, last access 03.02.2026.

[3] EC, Regulation (EU) 2024/3005 on the transparency and integrity of ESG rating activities (ESG Ratings Regulation), 2024, https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX%3A32024R3005, last access 03.02.2026.

About the EFFAS Commission on ESG

The EFFAS CESG was established in October 2007 to facilitate the integration of ESG factors into investment processes.

Composed of investment professionals from leading European and global sell-side and buy-side firms, including fund managers, financial analysts, and equity specialists, the CESG has been mandated by EFFAS to achieve several objectives.

These include establishing and coordinating EFFAS’s position on ESG reporting, measurement, and valuation; consolidating ESG expertise among European investment professionals; extending ESG efforts beyond individual EFFAS member companies; engaging in policy, academic and industry initiatives on ESG issues; organizing European-level conferences on ESG issues; and representing EFFAS in international conferences and projects related to ESG issues.

The CESG serves as a reference and networking centre for the ESG integration efforts of investment professionals across Europe.

About EFFAS

EFFAS is a not-for-profit organisation set up in 1962 with 15 national member associations in Europe, representing more than 18,000 financial analysts, asset managers, pension fund managers, corporate finance specialists, risk managers and treasurers, among many other investment professionals. EFFAS is a leading certification body with over 27,000 certificate holders worldwide, offering prestigious designations such as the Certified European Financial Analyst by EFFAS, the EFFAS Certified ESG Analyst® (CESGA) and the EFFAS Climate Risk Analyst® (ECRA).

For any further information, please contact:

Álvaro Wagener Díez | Marketing & Communications Manager

E-mail: a.wagener@effas.com | Phone Number: +49 69 98959519